Health Chatbots

Chatbot UX Design And Development from Liverpool

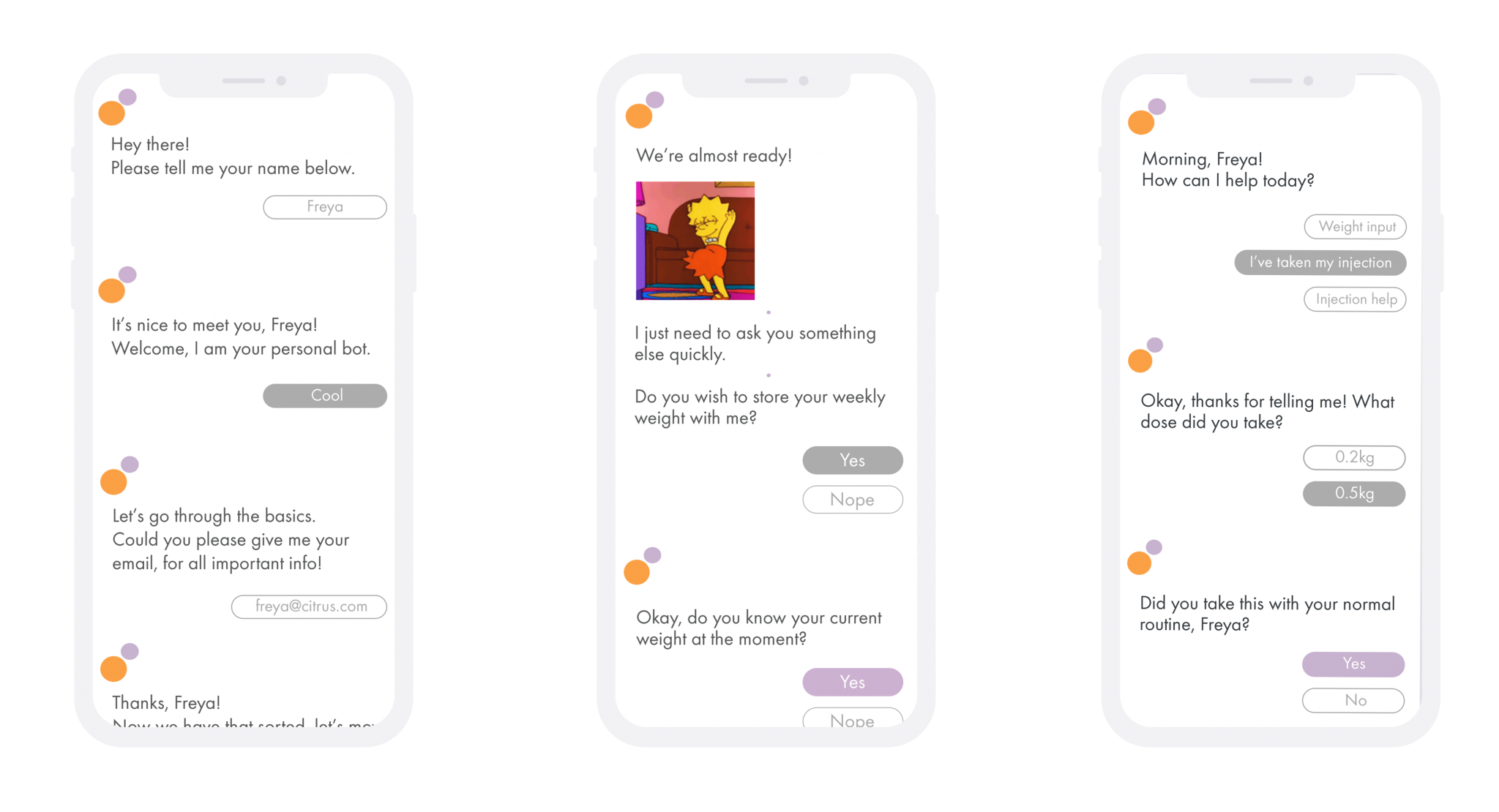

Citrus Suite has built a chatbot that has now had a global release (the details are confidential, unfortunately), and we have been building and supporting it from 2017 to the present. Citrus have created iOS and Android apps with interactions that reflect real-life conversation, integrating behavioural science to achieve better patient outcomes, alongside a web-based control centre for adding new content and knowledge as it becomes available. The product supports adherence to a newly launched drug: failure to stick to treatment is a serious problem that affects not only the patient but also healthcare systems.

What are chatbots and is healthcare progressive enough to use them?

Citrus Suite’s CEO, Chris Morland: “Chatbots are a logical next step for managing health conditions, and we’ve built a proprietary system for facilitating this, so clinicians can create interactive content via an online portal and this is synchronized with the user’s app. For some patient groups managing a condition or beginning a new medical treatment this digital support can be very effective. With our new chatbot app launched and established across the world, Citrus are excited to be part of an important and evolving area of healthcare.”

The concept of the chatbot is to act as virtual support for the patient, incorporating features that aid and inform the end user while giving professionals tools to add and deploy fresh content. With more companies designing chatbot solutions that can enhance people’s lives, whether by booking a doctor’s appointment or offering support with a person’s condition, can this advancement really be seen as a negative one? While this is very much an evolving landscape, progressive healthcare organisations are embracing the potential of chatbots.

The initial product has been created to support users with various health conditions. Contact us for more information.

Read more on Wikipedia, in our 2018 chatbot blog post and in our full 2018 industry report.

ChatGPT, Design, Integration, Development from Liverpool

Here are some ideas for how ChatGPT could integrate into digital health products:

- Symptom assessment: ChatGPT can be used to create a chatbot that helps patients assess their symptoms and determine the appropriate course of action. For example, a chatbot could ask patients questions about their symptoms, provide relevant medical advice and recommend whether to seek medical attention.

- Medication adherence: ChatGPT can be used to develop a chatbot that reminds patients to take their medication and answers questions about dosages, interactions and side effects. This can be particularly helpful for patients with chronic conditions who require ongoing treatment.

- Lifestyle coaching: The ChatGPT API can be used to create a chatbot that provides patients with personalised lifestyle advice based on their health status and goals. For example, a chatbot could offer exercise tips, healthy eating recommendations and stress-management strategies.

- Mental health support: ChatGPT can be used to develop a chatbot that provides patients with mental health support and resources. For example, a chatbot could offer coping strategies for anxiety and depression, connect patients with mental health professionals and provide information about mental health disorders.

- Patient education: The ChatGPT API can be used to create a chatbot that educates patients about their health conditions and treatments. For example, a chatbot could answer questions about medications, provide information about medical procedures and offer guidance on managing symptoms.

- Health professional support: ChatGPT can be used to develop a chatbot that assists health professionals in their work. For example, a chatbot could provide guidance on diagnoses, suggest treatment plans and offer resources for continuing medical education.

These are just a few examples of how ChatGPT can be used in digital health products. In each case, the key is to tailor the chatbot to the specific needs of the patient or health professional.

While ChatGPT is a powerful tool that can provide valuable support and guidance, it is not infallible, and the information it provides should not be taken as a substitute for professional medical advice. Service users should always consult a qualified healthcare professional before making any decisions related to their health. We therefore recommend that any digital health product using ChatGPT or its API include a disclaimer stating that the information provided by the chatbot is for informational purposes only and should not be considered a substitute for medical advice from a qualified healthcare professional. The disclaimer should also state that, while the chatbot has been trained on a vast amount of data, it may not always provide accurate information, and users should exercise caution and seek professional advice before making any healthcare decisions.
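One way to apply this recommendation is to attach the disclaimer in code, so that no individual reply can go out without it. The Python sketch below is a minimal illustration under stated assumptions: `generate_reply` is a hypothetical placeholder for whatever function actually calls the model (for example, a ChatGPT API client), and the `DISCLAIMER` wording and the `with_disclaimer` helper are our own illustrative names, not part of any OpenAI library.

```python
from typing import Callable

# Illustrative disclaimer wording based on the recommendation above;
# the final text should go through your own clinical and legal review.
DISCLAIMER = (
    "This information is for informational purposes only and is not a substitute "
    "for medical advice from a qualified healthcare professional. The chatbot may "
    "not always provide accurate information; please seek professional advice "
    "before making any healthcare decisions."
)


def with_disclaimer(generate_reply: Callable[[str], str]) -> Callable[[str], str]:
    """Wrap a reply generator so every response ends with the disclaimer.

    `generate_reply` is a hypothetical stand-in for the code that calls the
    model; it takes the user's message and returns the chatbot's reply text.
    """

    def wrapped(user_message: str) -> str:
        # Trim trailing whitespace from the model output, then append the
        # disclaimer as a separate paragraph.
        reply = generate_reply(user_message).rstrip()
        return f"{reply}\n\n{DISCLAIMER}"

    return wrapped


if __name__ == "__main__":
    # A stub generator stands in for a real model call in this example.
    bot = with_disclaimer(lambda message: "Drinking water regularly helps you stay hydrated.")
    print(bot("Any tips for staying hydrated?"))
```

Wrapping the reply function, rather than relying on the model to add the disclaimer itself, keeps the wording fixed and guarantees it appears on every response regardless of what the model generates.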
